The 100-Kilowatt Question

The question is no longer whether AI scales. It’s whether the grid survives the attempt.

When Nvidia CEO Jensen Huang took the CES stage this month, he didn’t just hold up a faster chip. He offered a lifeline to an infrastructure running on fumes. The “Vera Rubin” platform, slated for release later this year, promises to break the lockstep link between AI growth and energy consumption. That decoupling is critical, because utility providers are already signaling they cannot keep pace.

We have arrived at the “2026 Energy Cliff.” With data center electricity demand on track to double between 2022 and 2026, the industry has hit a physical ceiling. Nvidia’s Rubin isn’t merely a Blackwell successor; it is a calculated pivot to keep the industry moving when the wattage runs out. By integrating the new “Vera” CPU with the Rubin GPU and upgraded networking, Nvidia claims a 10x reduction in energy cost per generated token.

The Architecture of Efficiency

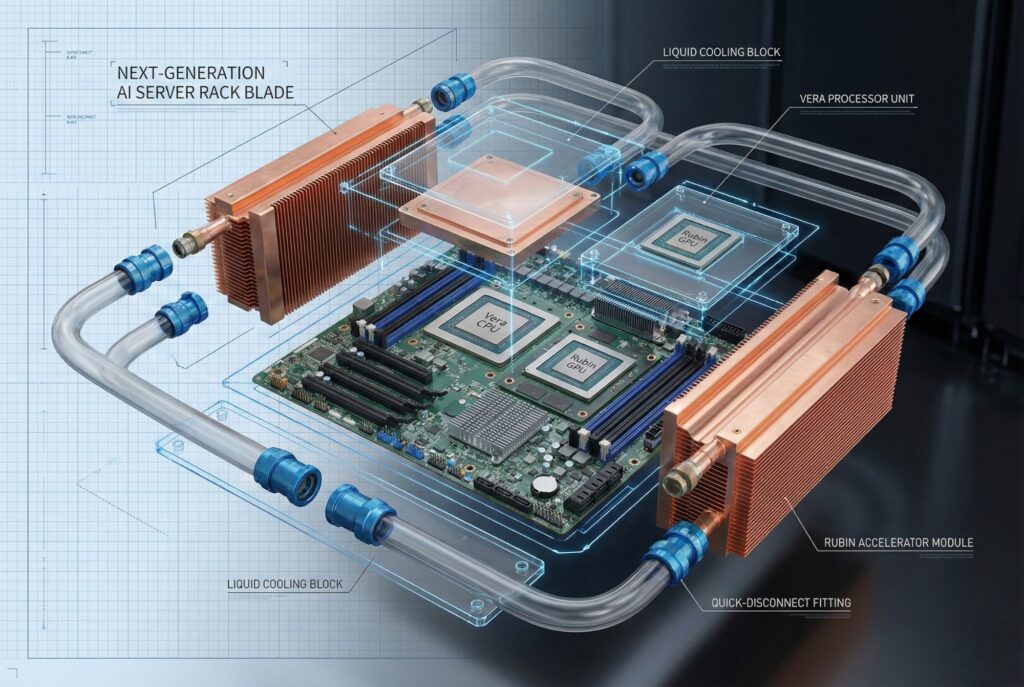

Rubin abandons simple component upgrades for “extreme codesign.” Nvidia stopped trying to just jam more transistors onto a die and instead re-architected the entire data center rack as a single computing unit. The system relies on six distinct chips: the Rubin GPU, Vera CPU, NVLink 6 Switch, ConnectX-9 SuperNIC, BlueField-4 DPU, and the Spectrum-6 Ethernet Switch.

The Rubin GPU, fabricated on TSMC’s 3nm process, naturally grabs the headlines. It uses High Bandwidth Memory 4 (HBM4) to deliver 22 TB/s of bandwidth, nearly triple Blackwell’s 8 TB/s. That bandwidth is non-negotiable for “agentic AI,” where models must reason across contexts of millions of tokens with minimal latency.
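
Some back-of-envelope arithmetic shows why bandwidth matters so much. When a model generates text one token at a time, each step has to read the model weights out of HBM, so memory bandwidth caps single-stream speed. This is a minimal sketch, not a benchmark. It assumes a hypothetical 70-billion-parameter model stored at 4 bits per weight and a batch size of 1. It ignores KV-cache traffic and compute limits. The function name is illustrative and is not part of any Nvidia tool.

```python
def decode_ceiling_tokens_per_s(bandwidth_tb_s: float, weight_bytes: float) -> float:
    """Upper bound on batch-1 decode speed if every generated token must
    stream all model weights from HBM once (ignores KV cache and compute)."""
    return bandwidth_tb_s * 1e12 / weight_bytes  # TB/s -> bytes/s, then per token


# Hypothetical model: 70B parameters stored at 4 bits (0.5 bytes) each.
PARAMS = 70e9
BYTES_PER_PARAM = 0.5
WEIGHT_BYTES = PARAMS * BYTES_PER_PARAM  # 35 GB

# Per-GPU HBM bandwidth (TB/s) as quoted in this article.
for name, bw in [("H100", 3.35), ("B200", 8.0), ("Rubin", 22.0)]:
    ceiling = decode_ceiling_tokens_per_s(bw, WEIGHT_BYTES)
    print(f"{name}: ~{ceiling:.0f} tokens/s ceiling")
```

Under these assumptions the ceilings are roughly 96, 229, and 629 tokens per second. Real deployments batch many requests and also read large KV caches for long contexts, so read this as an illustration of how throughput scales with bandwidth.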

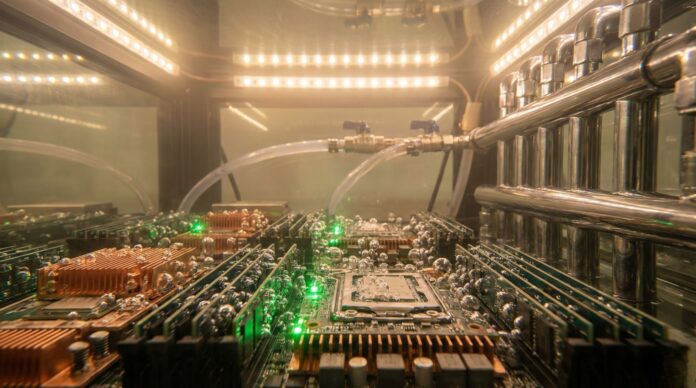

But the real efficiency driver is the Vera CPU. Built on custom “Olympus” Arm cores, Vera handles the data-heavy logistics that used to bog down the GPU, freeing the power-hungry GPU cores to focus purely on math. This division of labor lets the Rubin platform hold a rack power envelope similar to the NVL72 (~120kW) while drastically increasing throughput. Crucially, Nvidia’s “liquid-first” design lets the secondary water loop run at a warm 45°C (113°F), warm enough to shed heat without energy-intensive chillers.
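
The sensible-heat relation (heat removed = mass flow × specific heat × temperature rise) gives a rough sense of what carrying a rack's heat away with liquid involves. This rough sketch assumes the ~120 kW envelope above, plain water (specific heat of about 4.18 kJ/(kg·K)), and a hypothetical 10 K rise in coolant temperature across the rack. Real loops may use glycol mixtures and different temperature deltas.

```latex
\dot{m} = \frac{Q}{c_p\,\Delta T}
        = \frac{120\ \text{kW}}{4.18\ \text{kJ}/(\text{kg}\cdot\text{K}) \times 10\ \text{K}}
        \approx 2.9\ \text{kg/s}
        \quad (\text{roughly } 170\ \text{L/min of water})
```

The flow rate is modest. The savings come from the loop's temperature: heat leaving at around 45°C can be rejected without refrigeration.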

The 2026 Energy Cliff Explained

The “Energy Cliff” is the collision of three market forces hitting right now:

- Grid Saturation: Major hubs like Northern Virginia and Ireland have effectively run out of spare transmission capacity.

- Inference Scale: As models move from training to deployment, “always-on” power demand skyrockets.

- Hardware Density: Traditional air-cooled racks typically topped out around 20kW. AI racks now push 100kW+, rendering old facility designs obsolete.

The International Energy Agency (IEA) projects data centers could consume over 1,000 TWh in 2026, roughly the entire electricity consumption of Japan. Hardware efficiency is no longer a “green” bonus; it is an operational permit. If hardware doesn’t get leaner, new construction stalls, because in the most constrained hubs there is no spare grid capacity left to connect it to.
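
Headline energy figures are easier to grasp when converted to continuous power. This is a minimal sketch that assumes an 8,760-hour year. It also treats the projected doubling of data center demand between 2022 and 2026 as a clean 2x over four years. The helper names are hypothetical.

```python
HOURS_PER_YEAR = 8760  # non-leap year


def average_power_gw(annual_twh: float) -> float:
    """Convert annual energy (TWh) into the equivalent constant power draw (GW)."""
    return annual_twh * 1000 / HOURS_PER_YEAR  # TWh -> GWh, then divide by hours


def implied_annual_growth(factor: float, years: int) -> float:
    """Compound annual growth rate that multiplies demand by `factor` over `years`."""
    return factor ** (1 / years) - 1


print(f"1,000 TWh/yr is about {average_power_gw(1000):.0f} GW, around the clock")
print(f"Doubling over 4 years is about {implied_annual_growth(2, 4):.1%} growth per year")
```

That works out to roughly 114 GW of round-the-clock demand, growing at about 18.9% per year.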

Rubin’s job is to flatten this curve. By cutting the energy cost per token by a factor of ten, Nvidia argues, operators can scale output without a proportional spike in grid draw.
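
The arithmetic behind that argument is simple: at a fixed power draw, throughput scales inversely with energy per token. This sketch assumes the ~120 kW rack envelope cited earlier. It uses a purely hypothetical baseline of 1 joule per token and assumes all rack power goes to token generation, with no cooling or networking overhead.

```python
def tokens_per_second(power_kw: float, joules_per_token: float) -> float:
    """Steady-state token throughput that a fixed power budget can sustain."""
    return power_kw * 1000 / joules_per_token  # kW -> W (J/s), divided by J/token


RACK_KW = 120.0                                    # rack envelope cited for NVL72-class systems
BASELINE_J_PER_TOKEN = 1.0                         # hypothetical baseline energy per token
IMPROVED_J_PER_TOKEN = BASELINE_J_PER_TOKEN / 10   # the claimed 10x reduction

before = tokens_per_second(RACK_KW, BASELINE_J_PER_TOKEN)
after = tokens_per_second(RACK_KW, IMPROVED_J_PER_TOKEN)
print(f"{before:,.0f} -> {after:,.0f} tokens/s at the same {RACK_KW:.0f} kW draw")
```

The absolute numbers are invented and only the ratio matters. A 10x cut in joules per token yields 10x the tokens from the same grid connection.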

Spec Comparison: The Generational Leap

The following table outlines the architectural progression from Hopper to Rubin, highlighting the aggressive push toward memory bandwidth and density.

| Feature | Nvidia H100 (Hopper) | Nvidia B200 (Blackwell) | Nvidia Rubin |
|---|---|---|---|
| Architecture Year | 2022 | 2024 | 2026 |
| Process Node | TSMC 4N | TSMC 4NP | TSMC 3nm |
| Memory Tech | HBM3 | HBM3e | HBM4 |
| Memory Bandwidth (per GPU) | 3.35 TB/s | 8 TB/s | 22 TB/s |
| Rack TDP | N/A | ~120 kW (NVL72) | ~120 kW (est.) |
| FP4 Inference | N/A (no FP4 support) | ~20 PFLOPS per GPU | ~3.6 EFLOPS per rack |
| Interconnect | NVLink 4 | NVLink 5 | NVLink 6 |

Note: The Rubin inference figure is a rack-scale number for the NVL144/NVL72 configurations, whereas the Blackwell inference figure is per GPU, so the two are not directly comparable.
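
The generational jumps can be checked quickly from the per-GPU bandwidth figures in the table above. The script is illustrative only.

```python
# Per-GPU memory bandwidth (TB/s), taken from the comparison table.
bandwidth = {"H100": 3.35, "B200": 8.0, "Rubin": 22.0}

# Ratio between each generation and the one before it.
gens = list(bandwidth)
for prev, nxt in zip(gens, gens[1:]):
    print(f"{prev} -> {nxt}: {bandwidth[nxt] / bandwidth[prev]:.2f}x")

# Cumulative jump across both generations.
print(f"H100 -> Rubin: {bandwidth['Rubin'] / bandwidth['H100']:.2f}x")
```

The ratios come out to about 2.39x from Hopper to Blackwell and 2.75x from Blackwell to Rubin, for 6.57x overall. The 2.75x step is where the "nearly triple" description comes from.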

The “Utility Era” of AI

Rubin marks the end of the hype cycle and the start of the “utility era.” In the hype cycle, raw performance was the only metric. In the utility era, the economics of operation—specifically performance-per-watt—pick the winners.

The NVLink 6 switch is vital here, allowing up to 576 GPUs to function as a single logical brain. A larger, faster interconnect domain cuts the time GPUs spend idling while they wait for data, a major source of energy waste in large clusters. Meanwhile, the Spectrum-X Ethernet Photonics switch reportedly delivers a 5x improvement in networking power efficiency.
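
A GPU waiting on data still draws power without producing tokens, so idle time shows up directly in energy per token. The toy model below makes that concrete. Its busy power, idle power, and token rate are all hypothetical values chosen for illustration, not measured Rubin figures.

```python
def energy_per_useful_token(busy_w: float, idle_w: float, utilization: float,
                            tokens_per_busy_s: float) -> float:
    """Joules per token when a GPU computes for `utilization` of wall-clock time
    and spends the rest waiting for data while still drawing idle power."""
    if not 0 < utilization <= 1:
        raise ValueError("utilization must be in (0, 1]")
    # Average power over one second of wall-clock time.
    avg_power_w = busy_w * utilization + idle_w * (1 - utilization)
    # Tokens are only produced during the busy fraction of that second.
    tokens_per_s = tokens_per_busy_s * utilization
    return avg_power_w / tokens_per_s


# Hypothetical GPU: 1,000 W busy, 300 W idle, 100 tokens per busy second.
for u in (1.0, 0.9, 0.5):
    print(f"utilization {u:.0%}: {energy_per_useful_token(1000, 300, u, 100):.1f} J/token")
```

With these made-up numbers, dropping from full utilization to 50% raises energy per token from 10 J to 13 J, even though the chip itself is unchanged. A faster interconnect attacks exactly that idle term.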

Physics, however, remains stubborn. Efficient as Rubin is, a full-scale “Rubin Ultra” installation (expected in 2027) could push rack densities toward 600kW. That forces operators to abandon legacy infrastructure entirely. We will likely see an accelerated shift toward on-site power generation, such as small modular nuclear reactors (SMRs) or hydrogen fuel cells, to bypass the constrained public grid.
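
The density jump changes the arithmetic of site planning. This is a minimal sketch that assumes a hypothetical 300 MW on-site plant. It also assumes a power usage effectiveness (PUE) of 1.2 to cover cooling and conversion losses, and counts how many racks that site could feed at each density.

```python
import math


def racks_supported(site_mw: float, rack_kw: float, pue: float) -> int:
    """Whole racks a site can power after facility overhead (PUE) is deducted."""
    it_budget_kw = site_mw * 1000 / pue  # power left for IT after cooling and losses
    return math.floor(it_budget_kw / rack_kw)


SITE_MW = 300  # hypothetical on-site generation capacity
PUE = 1.2      # assumed facility overhead for a liquid-cooled hall

for rack_kw in (120, 600):
    print(f"{rack_kw} kW racks: {racks_supported(SITE_MW, rack_kw, PUE)}")
```

Under these assumptions, the same plant feeds about 2,083 racks at 120 kW but only 416 at 600 kW. Each dense rack does far more work, but the plant still caps the site's total draw.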

Looking Ahead

The Vera Rubin platform buys us time. It is a temporary reprieve for an industry colliding with thermodynamics, a pause button while the energy sector attempts to catch up to the appetite of digital intelligence.

As 2026 progresses, the success of the AI revolution won’t be measured in FLOPS. It will be measured in megawatts. The battle for AI supremacy has shifted fronts. It is no longer a code war; it is a grid war.