Seven hundred billion dollars. That is roughly what major technology firms could spend on capital expenditures in 2026, going by the high end of analyst estimates. It is a historic surge, driven almost exclusively by the race for artificial intelligence. Amazon, Microsoft, Alphabet (Google), and Meta have effectively told investors that the era of fiscal tightening is dead. In its place? An infrastructure buildout rivaling the GDP of mid-sized nations.

The checkbook is open for Nvidia GPUs, custom silicon, and power-hungry data centers. But Wall Street isn’t exactly cheering. While executives argue that not spending is an existential threat, a massive gap remains. On one side, hundreds of billions are flowing out the door. On the other, current AI revenue looks like a rounding error in comparison. It is a standoff: Silicon Valley’s long-term vision versus the market’s demand for right-now profits.

The Scale of the Spend

2026 isn’t just an outlier; it is a departure from reality as we knew it. In the past, capital expenditures moved in lockstep with revenue. You make more, you spend more. Not anymore. Today, CapEx is decoupling from immediate earnings, expanding at rates that would get a CEO fired in any other industry.

Analysts expect the “hyperscalers” (Amazon, Google, Microsoft, Meta, and Oracle) to spend over $600 billion this year. Some estimates even push that figure toward $700 billion. And let’s be clear: this isn’t for office chairs. The bulk of it is money funneled directly into AI infrastructure.

Amazon leads the pack. They are eyeing a ~$200 billion spend—a figure that includes logistics but leans heavily on AWS. Alphabet is right behind them ($175–185 billion). Then there is Meta. Once just a social media giant with light infrastructure needs, they are forecasting up to $135 billion. That is nearly double their 2025 outlay.

2025 vs. 2026 Projected Capital Expenditure

| Company | 2025 Est. CapEx (Billions) | 2026 Proj. CapEx (Billions) | Primary Investment Focus |
|---|---|---|---|
| Amazon | ~$131 | ~$200 | AWS Data Centers, Custom Chips (Trainium) |
| Alphabet | ~$90–100 | ~$175–185 | TPUs, Data Centers, Energy |
| Meta | ~$72 | ~$135 | Llama Training Clusters, Custom Silicon |
| Microsoft | ~$80 | ~$116–120 | Azure AI, OpenAI Partnership Infrastructure |

Source: Market estimates and company earnings guidance (Feb 2026).
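To put the table in growth terms, here is a minimal Python sketch that turns those estimates into year-over-year percentages. The figures are copied from the table; collapsing each range to its midpoint is an assumption made here for illustration, as are the names `CAPEX`, `midpoint`, and `yoy_growth`.

```python
# CapEx estimates in billions of USD, copied from the table.
# Each entry is ((2025 low, 2025 high), (2026 low, 2026 high)).
# Single-point estimates are stored as a range with equal ends.
CAPEX = {
    "Amazon": ((131, 131), (200, 200)),
    "Alphabet": ((90, 100), (175, 185)),
    "Meta": ((72, 72), (135, 135)),
    "Microsoft": ((80, 80), (116, 120)),
}


def midpoint(rng):
    """Collapse a (low, high) range to its midpoint (an assumption, not guidance)."""
    low, high = rng
    return (low + high) / 2


def yoy_growth(company):
    """Fractional growth from the 2025 estimate to the 2026 projection."""
    before, after = CAPEX[company]
    return midpoint(after) / midpoint(before) - 1


# Combined spend of the four companies in the table, on midpoints.
total_2025 = sum(midpoint(v[0]) for v in CAPEX.values())
total_2026 = sum(midpoint(v[1]) for v in CAPEX.values())

if __name__ == "__main__":
    for name in CAPEX:
        print(f"{name}: {yoy_growth(name):+.1%}")
    print(f"Four-company total: ${total_2025:.0f}B -> ${total_2026:.0f}B")
```

On midpoints, the four companies in the table go from about $378 billion to about $633 billion, which squares with the "over $600 billion" estimate even before Oracle is counted.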

Hardware and Energy: Where the Money Goes

Compute power is scarce. It is also astronomically expensive. Nvidia’s H100 and Blackwell chips remain the industry standard, commanding tens of thousands of dollars per unit. You don’t just buy one. A single training cluster for a frontier model demands tens of thousands of them. Suddenly, hardware costs for a single facility hit the billions.
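A back-of-the-envelope sketch shows how unit price and cluster size multiply into a hardware bill. The $30,000 unit price below is a hypothetical placeholder within the "tens of thousands of dollars" range, not a quoted Nvidia price, and the function names are illustrative. Networking, storage, and the building itself are left out.

```python
import math

# Hypothetical per-accelerator price in USD (an assumption, not a quote).
ASSUMED_UNIT_PRICE_USD = 30_000


def cluster_hardware_cost(gpu_count, unit_price_usd=ASSUMED_UNIT_PRICE_USD):
    """Accelerator-only cost of a cluster: count times unit price."""
    return gpu_count * unit_price_usd


def gpus_for_budget(budget_usd, unit_price_usd=ASSUMED_UNIT_PRICE_USD):
    """Smallest accelerator count whose cost reaches the given budget."""
    return math.ceil(budget_usd / unit_price_usd)


if __name__ == "__main__":
    print(cluster_hardware_cost(50_000))     # cost of a 50,000-GPU cluster
    print(gpus_for_budget(1_000_000_000))    # GPUs needed to hit $1 billion
```

Under that assumed price, it takes a cluster in the tens of thousands of GPUs for the accelerators alone to cross the billion-dollar mark.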

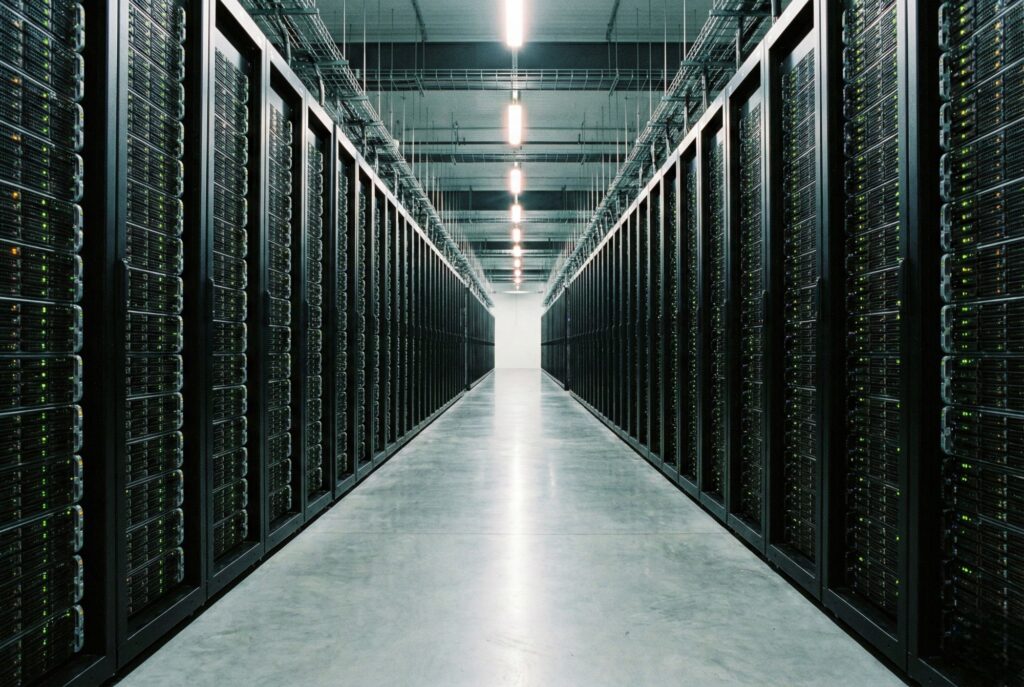

But the “burning money” narrative goes deeper than silicon. You have to house these chips. You have to cool them. Data centers are getting physically larger and more complex, requiring advanced liquid cooling just to keep the hardware from melting down.

Then there is the power bill. Tech giants aren’t just buying electricity anymore; they are funding its generation. Look at Microsoft’s 20-year power purchase agreement with Constellation Energy, which underpins the restart of a reactor at Three Mile Island. Look at similar nuclear deals from Amazon and Google. They are chasing 24/7 baseload power. These aren’t simple utility bills. They are long-term energy contracts adding billions to future liabilities.

The “Existential Risk” Defense

Press an executive on why they are spending so much, and you get the same answer: Under-investing is suicide. Over-spending is just a risk. They view this as a platform shift, the same magnitude as the move to mobile or cloud. Control the dominant AI model, and you dictate the tech landscape for a decade.

Sundar Pichai and Mark Zuckerberg have been explicit here. Even if the spending looks excessive now, they argue it is necessary to avoid obsolescence. Their logic? GPU capacity is fungible. If internal demand for AI training softens, they can just rent the compute power to enterprise customers.

It is a comforting thought, assuming demand catches up to supply. Right now, the math is lopsided. The infrastructure buildout runs to hundreds of billions of dollars a year. The actual AI revenue, from Copilot subscriptions to smarter ads, is a fraction of that.

Wall Street’s Patience Wears Thin

Investors usually give Big Tech a long leash. But 2026 forecasts are choking that goodwill. When these CapEx figures dropped in early February, Amazon and Microsoft stocks corrected sharply. The market isn’t just worried about the total spend; it is worried about the clock.

Data center assets rot. A server bought today might be scrap metal in four years. If these $100 billion investments don’t generate fat margins within that window, depreciation costs will hammer future earnings per share (EPS).
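The earnings hit is easy to sketch. The example below assumes straight-line depreciation with zero salvage value, a simplification chosen here for illustration; actual schedules and useful-life assumptions vary by company and asset class. The function name is hypothetical.

```python
def straight_line_depreciation(cost, useful_life_years, salvage_value=0.0):
    """Annual depreciation expense under the straight-line method.

    Straight-line with zero salvage value is an assumption for this sketch;
    it is not taken from any company's actual accounting policy.
    """
    if useful_life_years <= 0:
        raise ValueError("useful_life_years must be positive")
    return (cost - salvage_value) / useful_life_years


# A $100 billion investment written off over four years (both from the text),
# expressed in billions of USD.
annual_charge_bn = straight_line_depreciation(100.0, 4)

if __name__ == "__main__":
    print(f"Annual depreciation: ${annual_charge_bn:.0f}B")
```

Under those assumptions, a $100 billion outlay becomes a $25 billion expense every year for four years, whether or not the AI revenue has arrived.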

Goldman Sachs and others have flagged the danger. For these investments to break even, the AI software market needs to expand at an unprecedented rate. If the “killer app” doesn’t show up soon, these companies will be left owning the most expensive server farms in history—with no one to rent them.

Impact and Outlook

This aggressive spending is reshaping the global economy. It is a gold rush for semiconductor fabs, construction crews, and power plants. But it is straining the balance sheets of the world’s most valuable companies.

We are watching a high-stakes decoupling. Investment has left immediate return in the dust. For the rest of 2026, forget the technical benchmarks. The only metric that matters is the “Revenue to CapEx” ratio. Big Tech has to prove these billions are generating real dollars, not just hype. If they can’t? The tension between visionary ambition and shareholder reality is going to snap.
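For readers who want to track that ratio themselves, here is a minimal sketch. The CapEx input reuses Amazon’s ~$200 billion projection from earlier in the article; the AI revenue figure is a purely hypothetical placeholder, since the article reports no such number, and the function name is illustrative.

```python
def revenue_to_capex(ai_revenue_bn, capex_bn):
    """AI revenue divided by capital expenditure, both in billions of USD."""
    if capex_bn <= 0:
        raise ValueError("capex_bn must be positive")
    return ai_revenue_bn / capex_bn


# CapEx: Amazon's ~$200B 2026 projection, as cited in the article.
CAPEX_BN = 200.0
# AI revenue: HYPOTHETICAL placeholder for illustration only, not reported data.
PLACEHOLDER_AI_REVENUE_BN = 20.0

ratio = revenue_to_capex(PLACEHOLDER_AI_REVENUE_BN, CAPEX_BN)

if __name__ == "__main__":
    print(f"Revenue to CapEx: {ratio:.2f}")
```

A ratio well below 1.0 means spending is running far ahead of the revenue it is meant to produce; the direction of that number over successive quarters is the signal to watch.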