The experimental chat interfaces of 2024 are dead. In their place? Autonomous, agentic systems that run the show. Artificial intelligence in 2026 isn’t just generating text; it is executing work. This shift has forced a massive capital restructuring across the tech sector. U.S. cloud providers are pouring over $600 billion into infrastructure this year alone—not for “smarter” chatbots, but to feed the energy-hungry action models defining this new era.

Reliability now trumps raw capability. With OpenAI’s GPT-5 arriving last August and Google’s Gemini 3 series launching weeks ago, the frontier has moved from generation to reasoning. But it’s not smooth sailing. The EU AI Act is biting into deployment strategies, and we are seeing a “productivity paradox.” Companies are stalling. They’re integrating complex systems, and for now, it’s slowing them down.

The Rise of Agentic AI

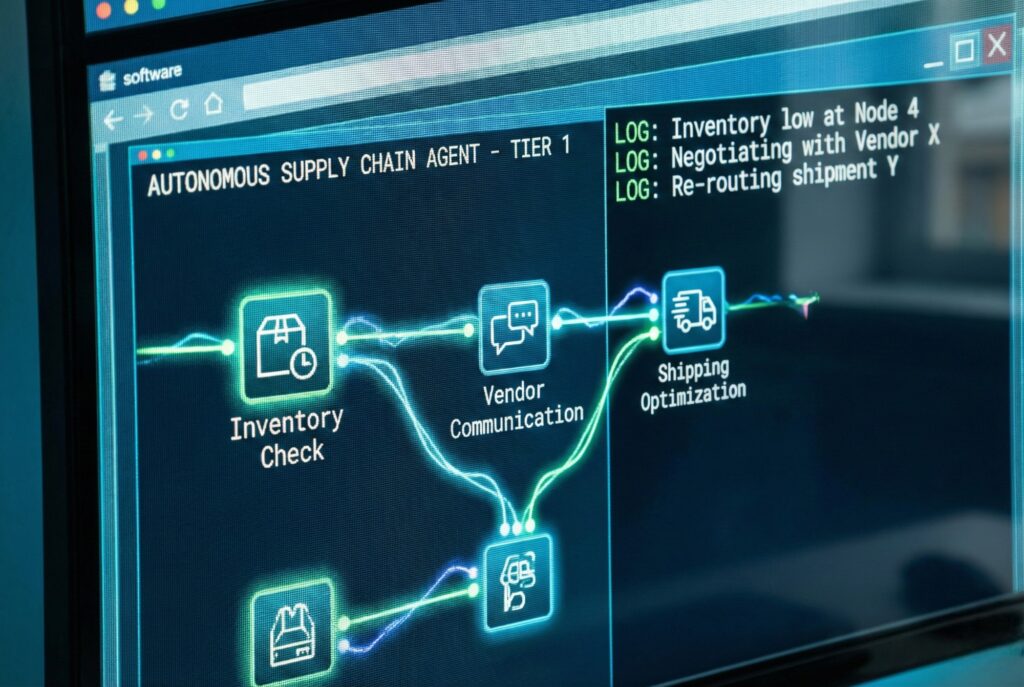

We used to talk about Large Language Models (LLMs). Now, it’s about Large Action Models (LAMs). The difference is stark. Yesterday’s AI was a consultant: it gave advice. Today’s AI, like Claude Opus 4.5 or GPT-5, is an employee. These agents have memory. They self-correct. They handle tasks that span days, not seconds.
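To make the “employee” framing concrete, here is a minimal sketch of the loop such an agent runs: act, check the result, remember what happened, and retry using its own failures as feedback. Everything in it is hypothetical (`AgentMemory`, `run_agent`, the `tool` and `check` callables, the retry budget); it illustrates the pattern, not any vendor’s agent API.

```python
from __future__ import annotations

from dataclasses import dataclass, field
from typing import Callable, Optional


@dataclass
class AgentMemory:
    """Hypothetical memory: a log of (task, result, succeeded) records."""
    records: list[tuple[str, str, bool]] = field(default_factory=list)

    def remember(self, task: str, result: str, ok: bool) -> None:
        self.records.append((task, result, ok))

    def failures(self, task: str) -> list[str]:
        """Past failed results for this task, used as feedback."""
        return [r for t, r, ok in self.records if t == task and not ok]


def run_agent(
    task: str,
    tool: Callable[[str, list[str]], str],
    check: Callable[[str], bool],
    memory: AgentMemory,
    max_attempts: int = 3,
) -> Optional[str]:
    """Act, verify, remember, retry: a toy self-correcting loop."""
    for _ in range(max_attempts):
        # Hand the agent its own earlier failures so it can change approach.
        result = tool(task, memory.failures(task))
        ok = check(result)
        memory.remember(task, result, ok)
        if ok:
            return result
    return None  # budget exhausted; a real system would escalate to a human


# Usage: a toy tool that fails first, then succeeds once it sees a failure.
def toy_tool(task: str, feedback: list[str]) -> str:
    return f"{task}: done" if feedback else f"{task}: error"


memory = AgentMemory()
print(run_agent("close ticket 42", toy_tool, lambda r: r.endswith("done"), memory))
# close ticket 42: done
print(len(memory.failures("close ticket 42")))  # 1
```

The design choice worth noting is that memory is keyed by task, so feedback from one job does not leak into another; real systems add persistence across sessions and a human escalation path where this sketch simply returns `None`.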

Data from early 2026 shows 79% of enterprises use AI agents in at least one function. They aren’t just chatting; they’re managing supply chain logistics, debugging code, and closing Tier-1 support tickets. The bottleneck isn’t intelligence anymore. It’s “agent interoperability”—getting a Salesforce agent to communicate with a Microsoft 365 agent without breaking the chain.

Hardware: The Rubin Era and Energy Constraints

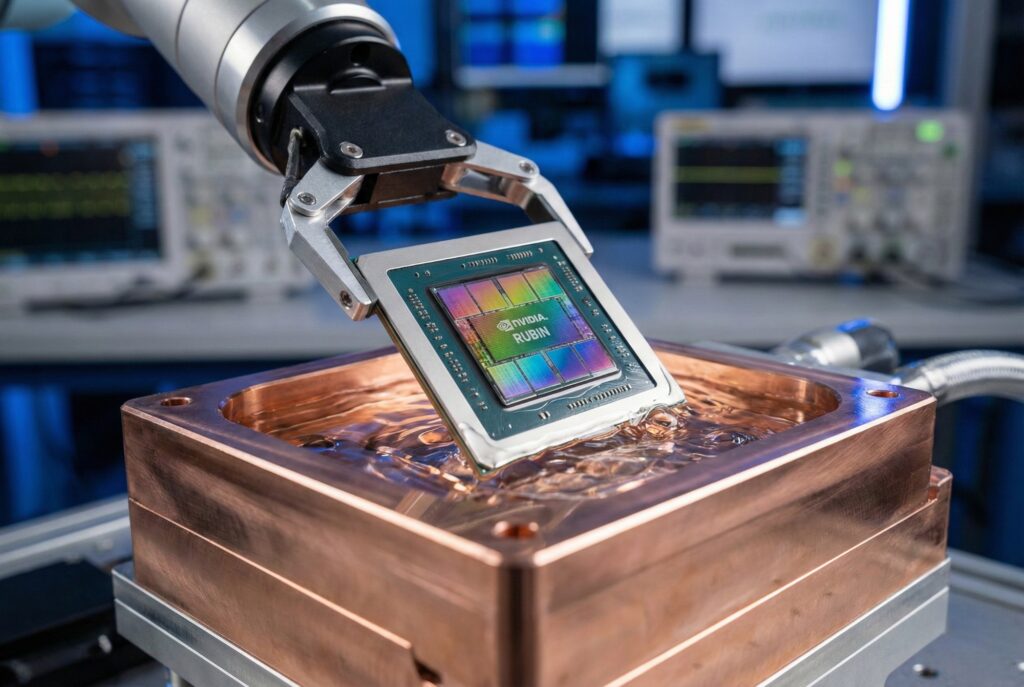

NVIDIA still sets the pace. Its Rubin platform, entering mass production in Q1 2026, succeeds the Blackwell architecture. Speed isn’t the only metric anymore; efficiency is. Rubin targets the heavy workloads of agentic AI, specifically long-horizon reasoning and mixture-of-experts (MoE) training.

But physics is pushing back. The sheer density of Rubin chips is forcing data centers to tear up their cooling schematics. The market is splitting because of it. Hyperscalers like Amazon and Google are building nuclear-backed “AI factories” to run frontier models. Meanwhile, “Edge AI” is having a moment. Apple and Qualcomm are shipping silicon that runs quantized 2025-era models right on your device. The cloud isn’t the only player now.
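“Quantized” is what lets those models fit on a phone: weights are stored as small integers instead of 32-bit floats. Below is a minimal sketch of one common scheme, symmetric per-tensor int8 quantization, assuming the weights arrive as a plain list of floats. The function names are hypothetical, and production toolchains from Apple or Qualcomm use their own formats that are not shown here.

```python
from __future__ import annotations

from array import array


def quantize_int8(weights: list[float]) -> tuple[list[int], float]:
    """Symmetric per-tensor int8 quantization: w is approximated by scale * q."""
    max_abs = max((abs(w) for w in weights), default=0.0)
    # Map the largest-magnitude weight to +/-127; guard against all-zero input.
    scale = max_abs / 127 if max_abs > 0 else 1.0
    q = [max(-127, min(127, round(w / scale))) for w in weights]
    return q, scale


def dequantize_int8(q: list[int], scale: float) -> list[float]:
    """Recover approximate float weights from int8 codes."""
    return [scale * v for v in q]


weights = [0.5, -1.27, 0.0, 0.9]
q, scale = quantize_int8(weights)
restored = dequantize_int8(q, scale)

# Rounding error per weight is at most half a quantization step.
print(max(abs(w - r) for w, r in zip(weights, restored)) <= scale / 2 + 1e-12)  # True

# Storage: 1 byte per int8 code vs 4 bytes per float32 weight.
print(array("f", weights).itemsize // array("b", q).itemsize)  # 4
```

The 4x memory saving is the whole point for edge devices; the cost is a bounded rounding error per weight, which is why quantized models are typically slightly less accurate than their full-precision originals.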

Comparative Analysis: The Frontier Models of 2026

The field has narrowed. Three competitors remain at the frontier, each staking a claim on a different philosophy. Here is how the state of the art stacks up for developers and enterprises in January 2026.

| Feature | OpenAI GPT-5 | Google Gemini 3 Pro | Anthropic Claude Opus 4.5 |
|---|---|---|---|
| Release window | Aug 2025 | Nov 2025 | Nov 2025 |
| Primary strength | General reasoning & multimodality | Deep integration & speed | Coding & complex logic |
| Context window | 400K tokens (API) | 1M tokens | 200K tokens |
| Agentic capability | Native “Operator” mode | Deep Think / agent tools | Self-correcting workflows |
| Key innovation | Persistent memory across sessions | Dynamic video/image reasoning | Lowest hallucination rate |

Regulation and the “Splinternet”

The EU AI Act has teeth now, and it hurts. Most of its obligations, including the rules for “high-risk” systems, are scheduled to apply from August 2026, and U.S. companies have already had to fork their strategies. If you deploy high-risk AI in Europe, you need audit trails that aren’t required in Asia or the States. The result? A “Splinternet.” A user in Berlin gets a compliant, slightly hobbled model; a user in San Francisco gets the full, messy version.

The geopolitical gap is widening, too. U.S. export controls on chips remain tight. Yet China’s domestic sector refuses to fold. Models like DeepSeek’s show that efficient engineering can offset raw hardware deficits, keeping Chinese tech giants dangerous in the application layer even if they lag in raw training compute.

The Workforce and Productivity Paradox

Adoption is high. The economic payoff? That’s complicated. Economists are seeing a “J-curve”: firms plug in agentic AI, and productivity actually drops for 3 to 6 months before it recovers. Workflows break. Staff have to learn how to audit machines rather than do the work themselves.

The labor market is recalibrating, and it’s brutal. Demand for entry-level knowledge work—copywriting, basic coding, data entry—is softening. Hard. Conversely, everyone wants an “AI Orchestrator,” someone who knows how to keep autonomous agents on the rails. And with “AI Slop” drowning the open web, verified human data is suddenly a premium asset. Real human interaction has never been more expensive.

Forward Outlook

The hype cycle is over. We are in the industrialization phase now. The rest of 2026 isn’t about chasing bigger parameters; it’s about cost and power. We have to make inference cheaper, and we have to keep the lights on. The winners won’t be the companies with the coolest demos. They’ll be the ones who can actually govern—and power—their digital workforce.