Big Tech is betting the equivalent of a mid-sized nation’s GDP on a single premise: that artificial intelligence is the new electrical grid, not just another feature update. In a synchronized capital injection that makes the Manhattan Project look like a budget rounding error, Microsoft, Google, Amazon, and Meta are on track to pour more than $300 billion a year into data centers, chips (including custom silicon), and power infrastructure.

This isn’t just R&D. It is a physical transformation of the economy.

Wall Street is polarized. Investors are forced to decide whether we are witnessing the construction of a new digital civilization or the most expensive financial hallucination in history. The “who” and “why” are obvious: fear of obsolescence drives the checkbook. The “what next,” however, is murky. While infrastructure spending skyrockets, the consumer applications generating tangible revenue (beyond chatbots and coding assistants) are lagging. As 2026 looms, the industry faces a critical reckoning: will these billions yield a new internet, or just a surplus of idle compute?

The Scale of the Spend: Anatomy of a $300B Bet

To grasp the sheer scale of this cycle, look at the Capital Expenditure (CapEx). In 2024, the “Hyperscalers”—Amazon AWS, Microsoft Azure, Google Cloud, and Meta—pushed their combined CapEx north of $200 billion. Projections for 2025 sit comfortably between $300–$400 billion.

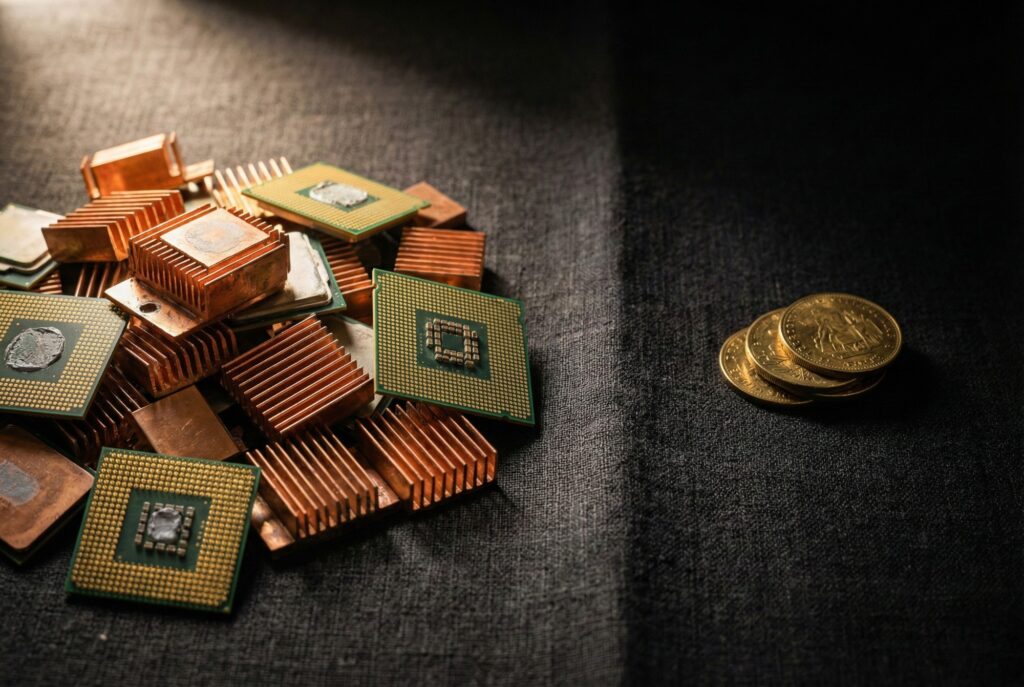

This cash isn’t vanishing into software development. It flows directly into physical assets:

- Data Centers: These aren’t just server rooms; they are sprawling concrete shells consuming acreage across Virginia, Ohio, and international hubs.

- Compute: Companies are hoarding hundreds of thousands of GPUs, primarily from Nvidia, at roughly $30,000 apiece.

- Energy: Gigawatt-scale nuclear and renewable power deals are being signed to feed grids that are already groaning under the load.

Analysts call this “defensive spending.” For Google, failing to deploy adequate compute for Gemini is an existential threat to Search. For Microsoft, the OpenAI partnership demands infrastructure capable of serving the entire enterprise world. The logic is brutal but simple: it is cheaper to overbuild and waste capacity than to underbuild and miss the platform shift entirely.

The Bear Case: The “Field of Dreams” Problem

Skeptics call it the “Field of Dreams” fallacy: if you build it, they will come. But will they pay?

David Cahn of Sequoia Capital articulated the “$600B Question,” asking where the revenue will come from to justify the infrastructure costs. The math is unforgiving. To pay back hundreds of billions in GPU investment, plus the data centers and energy needed to run it, AI products must generate comparable revenue at healthy margins. Right now, aside from ChatGPT and GitHub Copilot, few AI products have achieved the “stickiness” of streaming services or social media.
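The shape of that math fits in a few lines. The multipliers below are assumptions in the spirit of Cahn’s framing, not his exact inputs: each dollar of GPU spend is assumed to need roughly another dollar of data-center and energy cost, and the companies renting that compute are assumed to want a 50% gross margin. The $150B GPU figure is a hypothetical input, and `required_ai_revenue` is an illustrative helper.

```python
# Back-of-envelope "revenue needed" sketch.
# ASSUMPTIONS (illustrative, not Sequoia's exact inputs):
#   infra_multiplier: every $1 of GPU spend needs ~$1 more for buildings,
#                     power, and cooling, so total cost = 2x GPU spend
#   gross_margin:     buyers of that compute want a 50% gross margin

def required_ai_revenue(gpu_spend_b: float,
                        infra_multiplier: float = 2.0,
                        gross_margin: float = 0.5) -> float:
    """Annual AI revenue (in $B) needed to justify a given annual GPU spend (in $B)."""
    if not 0.0 <= gross_margin < 1.0:
        raise ValueError("gross_margin must be in [0, 1)")
    total_cost = gpu_spend_b * infra_multiplier   # GPUs plus the facilities around them
    return total_cost / (1.0 - gross_margin)      # revenue at which cost is (1 - margin) of sales

# Hypothetical input: $150B of GPU purchases in a year.
print(required_ai_revenue(150))  # 600.0
```

Nudging either multiplier moves the answer by tens or hundreds of billions, which is one reason estimates of the revenue “gap” vary so widely.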

Then there is the Depreciation Bomb. Fiber optic cables laid in the 1990s could last decades. AI chips cannot. An H100 GPU has a useful economic life of roughly 3–5 years before newer generations make it obsolete. If that $30,000 chip doesn’t generate a return quickly, it ends up as e-waste rather than a long-term asset. If the “killer apps” don’t arrive before the hardware needs replacing, the balance-sheet write-downs will be catastrophic.
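A minimal straight-line depreciation sketch shows why the short lifespan matters. It assumes the article’s ~$30,000 price tag, zero resale value, and a 3- or 5-year life. The helper names are hypothetical, and real accounting schedules vary by company.

```python
# Straight-line depreciation of one AI accelerator.
# ASSUMPTIONS: ~$30,000 price (the article's figure), zero salvage value,
# and a 3- or 5-year useful life. Real depreciation schedules vary by company.

def annual_depreciation(cost: float, life_years: int, salvage: float = 0.0) -> float:
    """Spread (cost - salvage) evenly across the useful life."""
    return (cost - salvage) / life_years

def breakeven_per_day(cost: float, life_years: int, salvage: float = 0.0) -> float:
    """Gross profit the chip must earn each day just to cover its own depreciation."""
    return annual_depreciation(cost, life_years, salvage) / 365

for years in (3, 5):
    print(f"{years}-year life: ${annual_depreciation(30_000, years):,.0f}/yr, "
          f"${breakeven_per_day(30_000, years):,.2f}/day")
# 3-year life: $10,000/yr, $27.40/day
# 5-year life: $6,000/yr, $16.44/day
```

This counts only the chip itself. Power, cooling, networking, and the building around it raise the daily hurdle further.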

The Bull Case: The “New Utilities” Argument

Bulls see it differently. They aren’t looking at quarterly P&L; they are looking at history. They compare this buildout to the electrification of the 20th century or the laying of the internet backbone.

In this view, GPU clusters are the new utilities. You had to lay the transatlantic cables before you could invent Netflix. The “Intelligence Grid” must be built before agents can autonomously book travel, cure diseases, or manage supply chains.

Key differentiators from the Dot-Com Bubble:

- Solvency: In 2000, many companies with little or no revenue were burning through debt and investor capital. Today, Microsoft and Google are largely funding the buildout with cash they have already earned.

- Immediate Utility: Unlike the “pet food online” startups of 1999, Generative AI already provides demonstrable value in coding and content creation.

- The Jevons Paradox: History suggests that as compute becomes cheaper and more efficient, total demand for it will explode, not contract, much as 19th-century coal consumption rose as steam engines became more efficient.
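The paradox has a simple economic shape. A toy constant-elasticity demand model (an assumption, not a forecast) shows when cheaper compute raises total spending. If quantity demanded is Q = A·P^(−e), then spend is A·P^(1−e), which rises as price falls only when demand is elastic (e > 1). The `total_spend` function and its parameters are illustrative.

```python
# Toy constant-elasticity demand model for the Jevons Paradox.
# ASSUMPTION: quantity of compute demanded is Q = scale * P**(-e), where P is
# the price per unit of compute and e is the price elasticity of demand.
# Total spend is therefore P * Q = scale * P**(1 - e).

def total_spend(price: float, elasticity: float, scale: float = 1.0) -> float:
    quantity = scale * price ** (-elasticity)  # units of compute demanded at this price
    return price * quantity                     # total money spent on compute

for e in (0.5, 1.0, 1.5):
    before = total_spend(1.0, e)  # baseline price of 1.0
    after = total_spend(0.1, e)   # compute becomes 10x cheaper
    print(f"elasticity={e}: total spend x{after / before:.2f}")
# elasticity=0.5: total spend x0.32
# elasticity=1.0: total spend x1.00
# elasticity=1.5: total spend x3.16
```

With inelastic demand (e < 1), a 10x price cut shrinks the market. With elastic demand, it grows it. The bull case is implicitly a bet on the second regime.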

Data Analysis: The Great Divergence

Here is the disparity in black and white. The table below compares the massive capital laid out by tech giants against the current estimated revenue attributed strictly to AI services (excluding legacy cloud growth).

| Company | Est. 2025 AI CapEx | Primary Investment Focus | Reported/Est. AI Revenue (Annualized) | The “Gap” Status |
|---|---|---|---|---|
| Microsoft | ~$80B+ | OpenAI infrastructure, Azure AI | ~$10B+ | Narrowing (Strong enterprise adoption) |
| Amazon | ~$100B+ | AWS data centers, Custom Silicon | ~$3-5B | Wide (Heavy infra play, catching up in apps) |
| Google | ~$75B+ | TPU clusters, DeepMind integration | Undisclosed (Integrated into Search/Cloud) | Ambiguous (Defensive spend vs. Search revenue) |
| Meta | ~$60B+ | Llama training clusters | ~$0 (Directly) | Strategic (Ad optimization & open source moat) |

Note: Figures are based on analyst estimates and recent earnings guidance for FY2025. “AI Revenue” separates specific GenAI products from general cloud storage or compute revenue.
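One rough way to read the table is as a revenue-to-CapEx coverage ratio. The sketch below treats the “~$X+” estimates as point values, uses the midpoint of Amazon’s $3-5B range, and leaves Google out because its AI revenue is undisclosed. `coverage_ratio` is a hypothetical helper, and the ratios are illustrative, not reported figures.

```python
# Revenue-to-CapEx "coverage" using the rough estimates in the table above.
# ASSUMPTIONS: "~$X+" figures are treated as point values, Amazon's ~$3-5B
# range uses its midpoint, and Google is omitted (AI revenue undisclosed).

def coverage_ratio(capex_b: float, revenue_b: float) -> float:
    """Fraction of one year's AI CapEx matched by one year of AI revenue."""
    return revenue_b / capex_b

estimates = {
    # company: (est. 2025 AI CapEx in $B, est. annualized AI revenue in $B)
    "Microsoft": (80, 10),
    "Amazon": (100, (3 + 5) / 2),
    "Meta": (60, 0),
}

for company, (capex_b, revenue_b) in estimates.items():
    print(f"{company}: {coverage_ratio(capex_b, revenue_b):.1%}")
# Microsoft: 12.5%
# Amazon: 4.0%
# Meta: 0.0%
```

Setting one year of revenue against one year of CapEx is crude, since the hardware is paid off over several years. Even so, it shows how far apart the two sides of the ledger still are.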

The Physical Reality Check

Forget the money for a moment. The $300B bet has a bigger enemy: physics.

The digital world is colliding with real-world constraints. Data centers now compete with residential neighborhoods for electricity. In Northern Virginia and Ireland, power grids are hitting capacity. This has pushed companies into unconventional corners: Microsoft signed a 20-year deal to buy power from a restarted reactor at Three Mile Island, and Amazon bought a nuclear-powered data center campus in Pennsylvania.

The shortage of transformers, cooling water, and skilled labor suggests that even with an unlimited budget, you cannot simply “buy” a new internet overnight. This physical bottleneck might actually be the industry’s saving grace. If power constraints force a slowdown in deployment, it buys time for software development to catch up, preventing a total supply-glut collapse.

What Comes Next?

We are entering the “Digestive Phase.” The panic-buying of GPUs will likely plateau in late 2026 as shareholders demand to see margins. The winners of the next cycle won’t necessarily be those who spent the most, but those who can convert this raw “intelligence” into invisible, indispensable utility.

The hundreds of billions spent so far have built the tracks. Now, the world is waiting for the trains.