It used to be bad grammar that gave them away. Now, the scammers sound exactly like your boyfriend.

Financial fraud linked to AI voice cloning has hit critical mass this Valentine’s Day, marking a terrifying pivot in online crime. Security firms and federal agencies are sounding the alarm: criminal syndicates have swapped stolen photos for synthesized audio, using accessible AI tools to impersonate partners with chilling accuracy. This isn’t just a tech upgrade—it’s an extinction event for traditional detection methods. Victims in the US and UK reportedly lost over $1.5 billion to relationship fraud in 2025 alone because the voice on the other end of the line sounded real.

We have moved past simple “catfishing.” Welcome to the era of “vishing” (voice phishing). Operating largely out of Southeast Asia and Eastern Europe, fraud rings are using these tools to bypass the natural skepticism of digital daters. They harvest audio clips from social media, or generate entirely new personas, to engage targets in real time. By mimicking vocal inflections and breathing patterns, they build trust quickly. That emotional foothold turns a skeptical match into a victim willing to liquidate life savings for a “medical emergency” or a “sure-fire” crypto investment.

The Evolution of “Sweetheart Swindles”

Historically, romance fraud was text-heavy. Perpetrators hid behind keyboards, crafting elaborate fictions about working on oil rigs or being deployed in the military to dodge video calls. The red flags were obvious: broken English, copy-pasted poetry, and a stubborn refusal to meet face-to-face.

2025 killed that dynamic.

The commoditization of generative AI means high-fidelity voice cloning is no longer the domain of Hollywood studios. It is available to anyone with a $20 software subscription.

From Text to Audio

Modern scammers don’t hide; they actively push for voice interaction. Using Text-to-Speech (TTS) engines trained on vast datasets of human speech, they generate audio that captures nuance: pauses, sighs, laughs.

A Norton report from early 2026 highlighted a grim statistic: nearly half of American online daters have been targeted by scams involving synthetic media. The barrier to entry is virtually non-existent. A scammer needs only three seconds of a person’s voice to create a clone. In romance scenarios, they often use a “persona” voice—a generated identity that remains consistent across months of phone calls, effectively masking the criminal team working the script.

Anatomy of a Deepfake Deception

The methodology is precise. It follows a psychological script designed to exploit loneliness, accelerated by machine learning.

The Setup and Grooming

It starts on mainstream platforms: Instagram, LinkedIn, Tinder. The profile is polished, often tuned with AI to appear attractive yet attainable. Once a match happens, the scammer immediately pivots to encrypted messaging apps like WhatsApp or Telegram. The goal? Get the victim away from the dating app’s safety algorithms.

The “Proof” Phase

Here is where the deepfake is deployed. To prove they are “real,” the scammer sends voice notes. These aren’t generic greetings. They are specific responses to questions you asked ten minutes ago.

- The Morning Voice Note: A warm, personalized audio message wishing the victim a good day, referencing specific details discussed the night before.

- The Live Call: Using real-time voice changers, the scammer answers the phone. While there may be a slight delay (latency) as the AI processes the voice, it is easily explained away as a “bad connection.”

The Crisis

The bond cements. AI helps here too, allowing scammers to manage multiple victims simultaneously with perfect recall of names and backstories. Then the crisis hits: they are detained at customs, facing a medical emergency, or locked out of frozen assets. The panic in their voice sounds genuine because the software reproduces human inflection. Because the victim “knows” the voice, the request for funds feels urgent, not suspicious.

The Financial Toll: A Year-over-Year Comparison

The introduction of AI has driven up both the volume and the success rate of these frauds. The table below compares 2024 figures for traditional romance scams with 2026 estimates for AI-enabled ones.

| Metric | Traditional Romance Scams (2024) | AI-Enabled Romance Scams (2026 Est.) |
|---|---|---|
| Primary Method | Text messages, stolen photos | Deepfake voice, real-time video, cloned audio |
| Avg. Loss per Victim | $4,500 | $12,000+ |
| Target Demographics | 50+ divorced/widowed | 18–35 (Gen Z) and 60+ |
| Time to Conversion | 3–6 months | 2–4 weeks |
| Success Rate | ~15% of targets engaged | ~40% of targets engaged |

Data aggregated from 2025 cybersecurity reports and financial fraud trackers.
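
To see how the two headline rows compound, here is a rough back-of-the-envelope calculation in Python. It multiplies the table's success rate by its average loss to get an expected loss per engaged target. That derived figure is an illustration, not a number from the underlying reports, and it treats the “$12,000+” entry as a lower bound.

```python
# Illustrative arithmetic only: the inputs are the table's figures, and
# "expected loss per engaged target" is a derived quantity, not a reported one.

def expected_loss_per_target(success_rate: float, avg_loss: float) -> float:
    """Average loss spread across every engaged target, scammed or not."""
    return success_rate * avg_loss


traditional = expected_loss_per_target(0.15, 4_500)   # 2024 column
ai_enabled = expected_loss_per_target(0.40, 12_000)   # 2026 est. column (lower bound)

print(f"Traditional: ${traditional:,.0f} per engaged target")
print(f"AI-enabled:  ${ai_enabled:,.0f} per engaged target")
print(f"Ratio:       {ai_enabled / traditional:.1f}x")
```

On these inputs, each conversation a scammer starts is worth roughly seven times more than it was under the text-only model, before accounting for the shorter time to conversion.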

The sharp rise in losses is largely attributed to “Pig Butchering” schemes. The deepfake voice isn’t just begging for cash; it is convincing the victim to invest in a fake cryptocurrency platform. The audio establishes the credibility needed to authorize massive transfers.

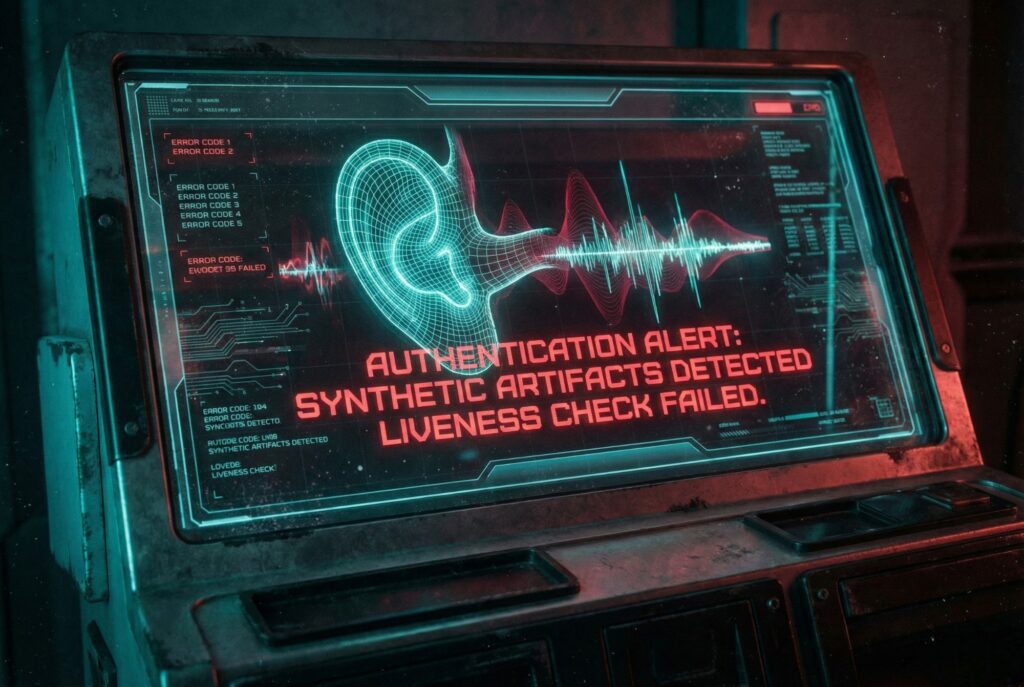

Detecting the Undetectable

Distinguishing human from machine is getting harder. But the tech isn’t perfect. Not yet. Attentive listeners can still spot the cracks in the facade.

Auditory Artifacts

AI models struggle with the non-verbal messiness of human speech.

- Lack of Emotion: The words may scream urgency, but the tone might remain flat or strangely consistent.

- Clipping and Static: Listen for unnatural “cuts” between words—a digital stutter—or a metallic, robotic undertone at the end of sentences.

- Unnatural Pacing: AI may rush through sentences without taking a breath or pause for too long in the middle of a phrase.

Verification Techniques

Cybersecurity experts recommend aggressive verification strategies:

- The “Safe Word” Test: Ask the person to say a specific, random phrase during a live call. AI can generate this, but the latency—the hesitation required to type the prompt into the generator—is a tell.

- Interrupt Frequently: AI voice changers often malfunction when users talk over each other. Interrupting the speaker can cause the software to glitch, revealing the scammer’s real voice underneath.

- Reverse Search Everything: While reverse image search is standard, new tools allow users to analyze audio files for signs of synthesis. Use them.

Industry and Regulatory Response

The surge in AI fraud has forced the hand of tech giants and banks. Dating platforms like Tinder and Hinge are integrating third-party ID verification systems requiring live video selfies, which are harder to spoof than static images.

Financial institutions in the UK and Australia have implemented “friction” measures—deliberately delaying payments that match scam patterns and flashing warnings when a customer attempts to transfer money to a new payee. In the US, the FTC has ramped up warnings, specifically highlighting the use of voice cloning in family emergency scams.
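
A “friction” rule of this kind might look something like the sketch below. Every field name, the threshold, and the rules themselves are hypothetical, chosen only to illustrate the idea of warning on a new payee and delaying payments that fit a scam pattern. No bank's actual logic is being described.

```python
from dataclasses import dataclass
from enum import Enum


class Action(Enum):
    ALLOW = "allow"
    WARN = "warn"    # show a scam warning before the customer confirms
    DELAY = "delay"  # hold the payment for a cooling-off period


@dataclass
class Payment:
    amount: float
    payee_is_new: bool              # first transfer to this payee
    payee_is_crypto_exchange: bool  # destination looks like a crypto platform
    customer_avg_transfer: float    # customer's typical transfer size


# Hypothetical threshold, chosen only for illustration.
LARGE_MULTIPLE = 5.0


def friction_for(p: Payment) -> Action:
    """Pick a friction step for a payment under the toy rules below."""
    unusually_large = p.amount > LARGE_MULTIPLE * p.customer_avg_transfer
    if p.payee_is_new and (unusually_large or p.payee_is_crypto_exchange):
        return Action.DELAY
    if p.payee_is_new:
        return Action.WARN
    return Action.ALLOW


# A $12,000 first-time transfer to a crypto platform from a customer
# who normally sends about $300 gets held rather than sent instantly.
print(friction_for(Payment(12_000, True, True, 300)))
```

The point of the delay is less to block the payment than to break the urgency the scammer has manufactured, giving the customer time to talk to someone else.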

But regulation lags behind innovation. “The tools available to fraudsters are enterprise-grade,” notes a cybersecurity analyst from the 2026 Norton Insights Report. “They are running these scams like call centers, using software that rivals legitimate customer service operations.”

Summary

The romance scam landscape has shifted from simple deception to psychological warfare. Deepfake voice technology bypasses the human instinct for trust, making Valentine’s Day 2026 a period of heightened risk.

As users navigate online dating, the reliance on audio and video as “proof” of identity must end. Security lies in skepticism: verifying identities through offline channels, recognizing the subtle flaws in synthetic media, and refusing to transfer funds to online acquaintances regardless of how “real” they sound. The future of online safety depends on personal vigilance—and perhaps new authentication standards that can cryptographically prove a human is actually on the line.
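
What might cryptographic verification look like in practice? One minimal sketch, assuming two people have agreed on a secret in person, is a challenge-response check built on Python's standard `hmac` module. The function names and the eight-character code length are illustrative choices, not an existing standard. Note the limit: this proves the other party holds the secret, not that they are human.

```python
import hashlib
import hmac
import secrets


def new_challenge() -> str:
    """Random one-time challenge the verifier reads out or texts."""
    return secrets.token_hex(4)


def response_code(shared_secret: bytes, challenge: str) -> str:
    """Short code derived from the secret and the challenge (HMAC-SHA256)."""
    digest = hmac.new(shared_secret, challenge.encode(), hashlib.sha256).hexdigest()
    return digest[:8]  # truncated for reading aloud; illustrative length


def verify(shared_secret: bytes, challenge: str, answer: str) -> bool:
    """Check the answer in constant time against the expected code."""
    expected = response_code(shared_secret, challenge)
    return hmac.compare_digest(expected, answer)


# The secret is agreed offline and never sent through the chat itself.
secret = b"agreed in person, never sent online"
challenge = new_challenge()
answer = response_code(secret, challenge)  # computed on the partner's device
print(verify(secret, challenge, answer))
```

Because each challenge is random and used once, a recorded or cloned voice reading out an old code does not help an impostor. Only someone holding the secret can answer a fresh challenge.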